Although nowadays the phrase "AI" invariably means "huge generative models, especially

language models, trained on lots of data", the field of artificial intelligence is

actually wider, deeper, and indeed much older than most people realise. I prefer (of

course) my definition: "AI is that which, were it to be done by a human,

would require intelligence". I was originally drawn to AI by wanting to

understand the human mind, and asking "could we make a mind?" I now

believe that even if we could, it would be wrong to do so.

Over the last four decades my work has spanned all the principal areas of AI, its

junior brother data analytics and predictive analytics, as well as the complex cousins

of compilers and operating systems. I have categorised this research into seven AI or

AI-related sectors:

From a pragmatic perspective we can also regard AI as the "acquisition, manipulation,

and exploitation of knowledge". The form that knowledge takes is important, and can

be:

The following diagram illustrates my involvement in the development of the field of AI.

Definitions are dashed-underlined. Sadly some of the

work of which I am most proud is subject to strict non-disclosure restrictions, and not

discussed here.

In the 1980s, Artificial Intelligence was very much an unusual,

almost eccentric, discipline. Applying it to industrial problems, whilst not unheard of,

was rare but exciting.

Transformer Expert System

In the 80s I built an expert system to determine when critical equipment -

electrical transformers in the UK power grid - would need servicing, using measurements

which could be obtained without taking them out of use. (This was typical of the

pre-privatised Central Electricity Generating Board, a well-managed chartered industry

whose metier was to produce reliable electricity for the country, and was always

developing ways of working efficiently.) It was programmed in BASIC(!), and

ran on a PC with 640k address space or less, which at the time was considered a high-spec

machine.

Search Space Traversal is one of the main problems AI in particular and

computer science in general face. It comes in many guises: route finding for navigation

programs; finding the weights (or "parameters") in a Large Language Model; designing the

best electronic component layout on a circuit board; or deciding the

best chess, checkers, or Go move to play.

The essence of the problem is that there are many possible solutions, ie routes, sets

of weights, layouts, or game positions; and there are way too many to check all possible

solutions - end of the universe timescales even on supercomputers. (This is the

technique of "brute force".)

One approach would be to start with any solution, and keep trying to improve it until

you find the best. This is known as "hill climbing": we find the "best" (visualised here

as the highest) by climbing the hill, and it's what our explorer friend on the right is

trying to do.

There is, as you can see, a problem. Unless, by unfeasibly good luck, he starts on

the best hill, the top of the hill he climbs is likely to be a "local maximum": it is

higher than the rest of the local terrain, but not the highest or best of all. Hill

climbing yields quite a good solution - he is, after all, at the top of a hill - but not

the best or optimum. In some cases - route planning or training LLMs - quite good may be

good enough, but not in all.
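
The local-maximum trap can be shown in a few lines. This is a minimal sketch, not code from any of the projects described here: the one-dimensional `TERRAIN` list and the helper names `height` and `neighbours` are invented purely for illustration.

```python
def hill_climb(height, start, neighbours):
    """Greedy ascent: keep moving to the highest neighbour until none is higher."""
    current = start
    while True:
        best = max(neighbours(current), key=height)
        if height(best) <= height(current):
            return current  # a peak: no neighbour is higher
        current = best

# Illustrative terrain with two hills: a low one at index 2, the highest at index 8.
TERRAIN = [0, 2, 4, 3, 1, 2, 5, 8, 9, 6]

def height(i):
    return TERRAIN[i]

def neighbours(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(TERRAIN)]

local_peak = hill_climb(height, 0, neighbours)   # starts at the foot of the small hill
global_peak = hill_climb(height, 5, neighbours)  # starts at the foot of the big hill
```

Starting at index 0 the explorer stops at index 2, the top of the small hill; only a start on the slopes of the big hill reaches index 8.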

To find a "probably best" hill our explorer must use other techniques, many of which

are used in this research at some point or other. Genetic algorithms are modelled

on how natural selection overcomes the same problem in evolution. Simulated Annealing is

modelled on how ice freezes, and involves sometimes deliberately going downhill.

Even these techniques, which do a good job of finding good local maxima, cannot guarantee

to find the best or optimal solution.
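
As a hedged illustration of "sometimes deliberately going downhill", here is a textbook-style simulated annealing loop. It is a sketch under assumptions: the terrain, the linear cooling schedule, and the parameter names `steps`, `t0`, and `seed` are all invented for the example, and nothing here is taken from the research code.

```python
import math
import random

TERRAIN = [0, 2, 4, 3, 1, 2, 5, 8, 9, 6]  # illustrative: small hill at 2, big hill at 8

def height(i):
    return TERRAIN[i]

def neighbours(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(TERRAIN)]

def simulated_annealing(height, start, neighbours, steps=2000, t0=5.0, seed=0):
    """Random walk that accepts downhill moves with a probability that shrinks as it cools."""
    rng = random.Random(seed)
    current = best = start
    for step in range(steps):
        temperature = t0 * (1 - step / steps) + 1e-9  # linear cooling, never exactly zero
        candidate = rng.choice(neighbours(current))
        delta = height(candidate) - height(current)
        # Uphill moves are always taken; downhill moves only sometimes.
        if delta >= 0 or rng.random() < math.exp(delta / temperature):
            current = candidate
        if height(current) > height(best):
            best = current  # remember the highest point seen so far
    return best

best_index = simulated_annealing(height, 0, neighbours)
```

While the temperature is high the walk can cross the valley between the two hills; as it cools, the walk behaves more and more like plain hill climbing. As the text says, there is still no guarantee that the best point seen is the global optimum.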

Problems such as chess were studied and even sponsored as test-beds to develop tools,

techniques, and understanding. In a large part, this approach was successful. But even

now we put up with sub-optimal solutions because it's the best that can be done: the

word embeddings in LLMs may have, in effect, duplicate dimensions.

Chess

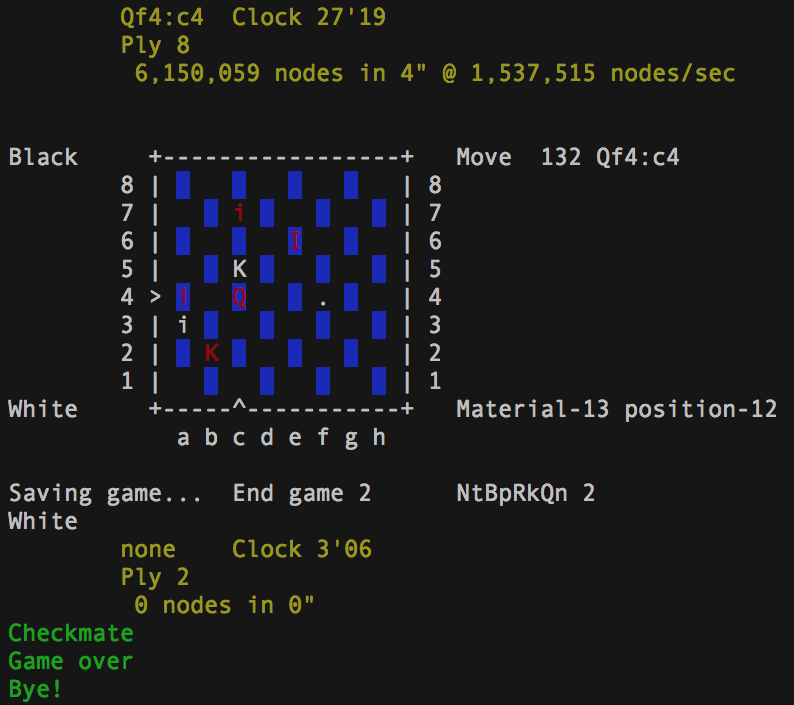

Whilst studying Computer Science at London University I wrote a chess

program in Pascal, as an exercise in recursion. Chess lives on as

Imp Chess.

Chess and similar programs exemplify the important AI problem of search-space traversal: how,

in an unfeasibly large domain of possible solutions, do we find the answer to this

problem?
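
The recursive core of a game-playing program can be sketched on a game far smaller than chess. The example below is an assumption-laden illustration, not the Pascal chess program: it applies negamax (a compact form of minimax) to a stick-taking game in which players alternately remove one to three sticks and whoever takes the last stick wins.

```python
from functools import lru_cache

MOVES = (1, 2, 3)  # a player may take one, two, or three sticks

@lru_cache(maxsize=None)
def negamax(sticks):
    """Return +1 if the player to move can force a win, otherwise -1."""
    if sticks == 0:
        return -1  # the opponent took the last stick, so the player to move has lost
    # My best outcome is the negation of the opponent's best outcome after my move.
    return max(-negamax(sticks - take) for take in MOVES if take <= sticks)

def best_move(sticks):
    """Choose the move that leaves the opponent in the worst position."""
    legal = [take for take in MOVES if take <= sticks]
    return max(legal, key=lambda take: -negamax(sticks - take))
```

Chess needs the same recursion, but its search space is far too large to explore to the end, so a real program cuts the search off at some depth and scores the position with an evaluation function.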

(As an exercise in complex programming, every aspiring programmer should write a

chess program, a compiler, or an

operating system.)

In the early '90s it was not clear that computer

programs could do anything useful at a semantic or understanding level. And a document

could easily be too big to load into RAM. None the less,

we had a go...

Multi-Lingual Summariser

I believe I wrote the first industrial-grade multi-lingual text

and HTML summariser. It:

used a technique I developed called Discussion Flow Analysis ("DFA") on a

tree-representation of the parsed text. DFA endeavours to produce a summary of n

sentences which, when read together, have a discussion flow as similar as possible to the

original document, so it is better than just choosing the “best” n sentences. (A

heuristic avoids the combinational explosion the pure technique would produce.)

could even summarise tables (keeping only the relevant rows or columns)

understood images from their context

was extremely fast, so that summaries could be shrunk and grown in real time by means

of buttons. It achieved this partly by having two internal phases: an analysis phase,

performed once, and a synthesis phase, performed when one of the buttons was pressed

worked in an environment of scarce RAM: it was typically not possible to load an

entire document into memory, and this was another reason for structuring the analysis

into passes, like a compiler

won an industry award, and was licensed by IBM. (Who were, by the way, a delight to

work with, and always behaved with impeccable integrity.)

was multi-lingual, even handling languages such as Japanese, producing what, according to

Japanese speakers, were perfectly respectable summaries. Watching a

program you wrote summarise a language you don’t speak remains a surreal experience even

now, let alone in the 1990s.

was able to focus on a particular query, which was particularly useful when used

alongside text retrieval

A client tested the summariser, asking both it and ten people to summarise ten

documents. Each person then scored each of the other summaries. They discovered that

where people could agree on what constituted a good summary, the summariser did well; but

where people could not agree, the summariser did not do so well. This interesting

result hints that some documents are intrinsically summarisable, whilst others are not.

Unlike Large Language Models, this technique is incapable of "hallucinating"

(aka "not working properly", "generating errors", or "just making it up") and therefore

is still preferable in any environment where welfare is at issue. A

Python implementation exists today.

One of our developments was to add knowledge

to language understanding. There was no vast repository of internet knowledge available -

there was no internet(!) - so explicitly captured knowledge was used. Arguably this is

better anyway, since this knowledge would reflect the user's perspective.

Semantic Text Retrieval

Inquisitor was a multi-lingual knowledge-based text retrieval engine,

also licensed by IBM. As with the summariser, it incrementally grew its knowledge base,

so that its understanding of the meaning of significant terms was the same as the reader's,

significantly boosting its performance. By allowing those explanations of terms to span

languages, Inquisitor could search across languages.

Classification is a fundamental task of AI, which

is now routine - you probably use it as a spam filter.

News Classification

Similar programs, using inducted or curated knowledge, could analyse and then classify

or filter text.

With a news publisher we conducted a quantitative golden-set study on the domain of

news stories, with our AI trained with supervised learning.

It revealed that whilst the AI is more accurate than people it is less flexible,

unable to recognise exceptions, and more likely when wrong to be dramatically wrong.

This characteristic of trained AI is still apparent in recent large language models,

which are often confidently wrong.

Classification techniques could reduce the amount of text to train an LLM,

and to keep the quality high.

An innovative application of AI is to reduce work

load, applying human knowledge to human interactions.

Automatic e-mail responder: AutoAnswer

I built AutoAnswer for automatic e-mail responding for help-desks. When it received an

email it would:

See if this e-mail was a response to an earlier of its own answers, in which case

it would forward it on to a human being, on the basis that its own answer had not

been fully satisfactory.

Use sentiment analysis to see if the sender was angry, and also in this case send

it on to a human being, on the basis that accidentally upsetting this sender

might lose a customer.

Use its knowledge base and natural language understanding to see if this was a

question it understood and could answer, in which case it would go ahead and

answer. In a major deployment, this outcome was about 80% of cases, so it reduced

the work-load by a factor of five.

The final case is where it doesn't recognise the question, so it would be sent on

to a human being, who would not only answer the original correspondent but, since

this question and answer is clearly missing from the knowledge base, add it

to the knowledge base.
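
The four-way routing above can be sketched as a single function. Everything concrete here is a placeholder assumption: the `Email` class, the keyword list standing in for sentiment analysis, and the substring lookup standing in for the knowledge base and language understanding. The real system's knowledge representation is not described in enough detail to reproduce.

```python
from dataclasses import dataclass

@dataclass
class Email:
    text: str
    in_reply_to_bot: bool = False  # True if this answers one of the system's own replies

# Crude stand-ins, for illustration only.
ANGRY_WORDS = {"furious", "unacceptable", "disgraceful"}
KNOWLEDGE_BASE = {"reset password": "Use the 'Forgot password' link on the sign-in page."}

def triage(email):
    """Return (route, detail), where route is 'human' or 'auto'."""
    text = email.text.lower()
    if email.in_reply_to_bot:
        return ("human", "follow-up to an automatic answer")
    if any(word in text for word in ANGRY_WORDS):
        return ("human", "sender appears angry")
    for question, answer in KNOWLEDGE_BASE.items():
        if question in text:
            return ("auto", answer)
    return ("human", "unrecognised question: add the answer to the knowledge base")
```

If 80% of e-mails take the `auto` route, people handle the remaining 20%, which is one fifth of the original volume.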

The challenge was not the sentiment analysis (which was working well in the

2000s), but in finding a knowledge representation which was both powerful (and so could be

applied to a wide range of queries) and accurate, yet simple enough to be written by

domain experts who were not knowledge engineers. This challenge was successfully met, as

AutoAnswer handled 80% of incoming queries for a large help-desk organisation. This

is an effective deployment approach for AI: don't ask it to do everything, just let it

reduce the work-load, in this case by a factor of five.

Computer systems were able to stitch together simple replies, filling in

the slots of sentences, but could we build a more flexible language synthesis, full of

variety but with carefully constrained meaning? And to do so with a system which is not

hard to program?

Generative Grammars

AutoAnswer also contained a generative language system. Unlike an LLM or n-gram

generative system, this used a generative grammar, which gives far tighter control

on the text generated, so there is no possibility of, for example, accidentally promising

wonderful offers to customers to which one is then contractually bound. A grammar allows

a class of text to be specified, and then an intended combinational explosion ensures that

the text that is generated comes from an astronomically huge population of

specification-conforming texts. Or, of course, vast numbers of conforming text samples

can be generated if that is required for training another system.

This approach (also used in the Virtual Astronaut Mars Lander) can be

extended with reasoning and memory capability to make the text generation anything but

simplistic.

This generative grammar is an excellent way to generate training text for

a neural net learning system. I used it that way many years later in training a neural

net intent recogniser. As a bonus, since it is a text generator, the text does not

have to be stored before being used for training, but can be generated and even

regenerated on the fly. If needed, state can be recorded, and the same text

regenerated.
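
A toy version of the idea, as a sketch only: the `GRAMMAR` below is invented for illustration and is not the AutoAnswer grammar. It shows the two properties described above: every output conforms to the specification, and recording the state (here just a random seed) is enough to regenerate the same text.

```python
import math
import random

# Each non-terminal maps to a list of alternative productions (illustrative only).
GRAMMAR = {
    "reply": [["greeting", "body", "closing"]],
    "greeting": [["Hello,"], ["Good morning,"], ["Thank you for getting in touch."]],
    "body": [["your order", "status"], ["the item you asked about", "status"]],
    "status": [["has been dispatched."], ["is being prepared."]],
    "closing": [["Kind regards."], ["Best wishes."]],
}

def generate(symbol, rng):
    """Expand a symbol recursively; anything not in GRAMMAR is literal text."""
    if symbol not in GRAMMAR:
        return symbol
    production = rng.choice(GRAMMAR[symbol])
    return " ".join(generate(part, rng) for part in production)

def reply(seed):
    """The seed is the recorded state: the same seed regenerates the same text."""
    return generate("reply", random.Random(seed))

def count_texts(symbol):
    """Number of distinct derivations: the intended combinational explosion."""
    if symbol not in GRAMMAR:
        return 1
    return sum(math.prod(count_texts(part) for part in production)
               for production in GRAMMAR[symbol])
```

Even this five-rule grammar yields 24 distinct replies; the count multiplies with every alternative added, while the grammar still cannot say anything its author did not specify.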

At times, this type of language generation may still be more appropriate than an

LLM, especially when we want to very tightly constrain the import of what is said.

In the 20th century almost all natural language

representation was in the form of text, whether it be inverted text indexes or n-gram

statistics. A few companies were pioneering alternative, non-textual, language

representations.

Non-textual language knowledge: Bullets

Bullets is a form of semantic language representation amenable to extremely fast

manipulation:

Representation: A bullet is a one-way mapping from text (and potentially images) to

overloaded bit-signatures, where that mapping is itself a learnt optimisation. Because

the representation is one-way - text to bullet is possible, but bullet to text is

ambiguous - they are intrinsically secure.

Manipulation: Bullets can be 'added' to summarise a set of documents, or multiplied to

yield the cross-relevance of one bullet (perhaps representing a query) to an index of

bullets. These manipulations are extremely fast, partly because of the optimisation

during the learning-the-mapping process. It was one of the few circumstances where

dropping from Pascal or C to assembler was worth-while, because in that way an

'irrelevant' candidate bullet (when doing a search) could be disposed of in as few as

four machine instructions.
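
The flavour of these operations can be sketched with ordinary integers as bit-signatures. This is an illustration under heavy assumptions, not the Bullets implementation: the real word-to-bit mapping is learnt, whereas here a hash stands in for it, and reading "add" as bitwise OR and "multiply" as bitwise AND followed by a bit count is an interpretation of the description above.

```python
import hashlib

BITS = 64  # illustrative signature width

def bullet(text):
    """Map text to an overloaded bit-signature (a hash replaces the learnt mapping)."""
    signature = 0
    for word in text.lower().split():
        digest = hashlib.sha256(word.encode("utf-8")).digest()
        signature |= 1 << (digest[0] % BITS)  # several words may share one bit
    return signature

def add(*bullets):
    """'Adding' bullets: the union of their bits summarises a set of documents."""
    combined = 0
    for b in bullets:
        combined |= b
    return combined

def relevance(query, candidate):
    """'Multiplying' bullets: count the bits the two signatures share."""
    return bin(query & candidate).count("1")

def is_irrelevant(query, candidate):
    """One AND and one comparison are enough to discard a non-matching candidate."""
    return query & candidate == 0
```

Because many words can map onto the same bit, the text cannot be recovered from the signature, which is the one-way property described above.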

I have recently been using a bullets-approach in calculating a form of

word-embeddings used in Large Language Models. Because they are

optimised, they are extremely fast, saving CPU. Because they are lossy, they are

extremely compact, saving RAM; but as that optimisation is a semantic one, the

"information" lost is anyway redundant and not helpful in calculating the embeddings. In

other words, useless "information" is dropped early on in the pipeline.

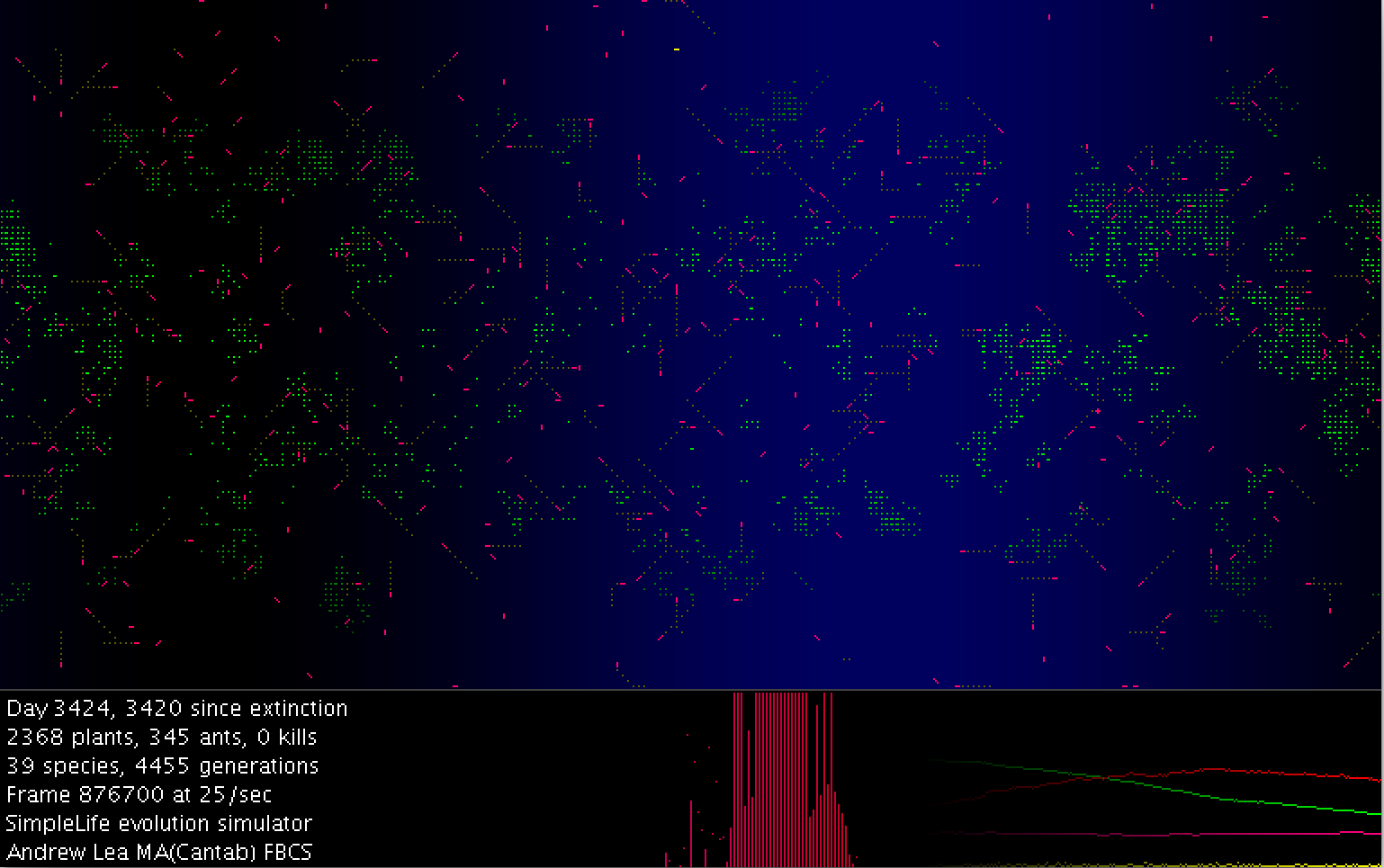

Biology can inspire AI.

In the pre-spacecraft but photographic era it was thought that earlier drawings of

'spokes' in Saturn's rings or craters on the surface of Mars were imagined. But when

spacecraft eventually visited those planets, it turned out that the human eye had been

doing an excellent job of capturing the fragmentary moments of diffraction limited

'seeing', when the atmosphere momentarily cooperated.

Active Imaging

In the image domain I wrote an astro-photography program, Active

Imaging, which produces sharp photos from the fuzzy video of a telescope web-cam.

Deliberately modelled on the human eye-brain system, by selecting momentary “sharp”

fragments, it assembles an over-all sharp image. Active Imaging can also assemble a

bigger picture, for example if a telescope is left in a fixed position, so the image of

the moon scans across the camera frame due to the rotation of the earth.

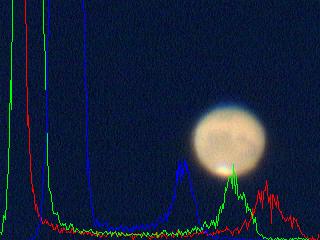

The image on the left is one of the input images, from a video stream, with the

relative strengths of each channel superimposed.

On the right is the active image, which shows far more detail.

(For astronomers: an active image is not simply a stacked image, even though

stacking does indeed reduce noise by the square root of the number of frames. An active

image stacks only the 'best' parts of each image, in the same way as an eye, and thus

can capture the momentary diffraction-limited fragments that appear from time to time.)
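
The difference from plain stacking can be sketched as follows. This is a simplified illustration, not the Active Imaging algorithm: it assumes frames are already aligned, uses a crude gradient sum as the sharpness measure, and keeps only the single sharpest tile per region rather than stacking the best few. The function names are invented for the example.

```python
def sharpness(tile):
    """Sum of absolute horizontal pixel differences: a crude focus measure."""
    return sum(abs(row[i + 1] - row[i]) for row in tile for i in range(len(row) - 1))

def crop(frame, top, left, size):
    """Cut a size-by-size tile out of a frame stored as a list of rows."""
    return [row[left:left + size] for row in frame[top:top + size]]

def active_image(frames, size):
    """Assemble an image from the sharpest tile found at each position."""
    height, width = len(frames[0]), len(frames[0][0])
    result = [[0] * width for _ in range(height)]
    for top in range(0, height, size):
        for left in range(0, width, size):
            best = max((crop(f, top, left, size) for f in frames), key=sharpness)
            for dy, row in enumerate(best):
                result[top + dy][left:left + len(row)] = row
    return result

# Two tiny frames: each is sharp in one half and blurred (flat) in the other.
frame_a = [[0, 9, 5, 5], [9, 0, 5, 5]]
frame_b = [[5, 5, 0, 9], [5, 5, 9, 0]]
combined = active_image([frame_a, frame_b], size=2)
```

The combined image takes its left half from `frame_a` and its right half from `frame_b`, so it is sharp everywhere even though no single input frame is.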

Active Imaging is still an effective way of constructing diffraction-limited

images and unlike other techniques in use, needs neither a bright guide-star nor an

artificial laser-star. I would still welcome a professional observatory contacting me,

as I believe Active Imaging would be a powerful tool to add to their inventory.

Agentic AI acts as an agent on behalf

of the user, typically ferreting out useful information, and distilling it. We developed

and deployed this approach at the turn of the century.

Agentic AI: Intelligent Directed Spiders

The "Concept Engine" must have been one of the very first AI agents or intelligent

directed spiders (hence the picture!).

The user would start it by carefully defining the research he or she wanted carried out,

by means of a series of natural language prompts.

The Concept Engine would then research the web, traversing from one web page to another

by means of the hyper-link, climbing the hill of relevance to more and more useful

content. It ran multiple search queues, each with slightly different but of course

related semantic emphasis, to avoid being trapped in local maxima. It was able to

interrogate web sites, understanding query syntax on the fly, and could therefore access

the deep web as well.
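
"Climbing the hill of relevance" corresponds to a best-first traversal of the link graph. The sketch below is an assumption, not the Concept Engine's code: it runs a single priority queue over an in-memory dictionary of links, with a caller-supplied `relevance` function standing in for the semantic scoring. Running several such queues with different scoring functions would approximate the multiple-queue design described above.

```python
import heapq

def directed_crawl(start, links, relevance, budget):
    """Visit up to `budget` pages, always expanding the most relevant page known so far."""
    frontier = [(-relevance(start), start)]  # negated scores turn heapq's min-heap into a max-heap
    seen = {start}
    visited = []
    while frontier and len(visited) < budget:
        _, page = heapq.heappop(frontier)
        visited.append(page)
        for target in links.get(page, []):
            if target not in seen:
                seen.add(target)
                heapq.heappush(frontier, (-relevance(target), target))
    return visited

# Illustrative link graph and relevance scores.
LINKS = {"home": ["news", "transformers"], "transformers": ["servicing"], "news": ["sport"]}
SCORES = {"home": 0.1, "news": 0.2, "transformers": 0.8, "servicing": 0.9, "sport": 0.0}
route = directed_crawl("home", LINKS, SCORES.get, budget=3)
```

With a budget of three pages the crawl goes from `home` to `transformers` to `servicing`, never spending effort on the less relevant `news` branch.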

It would then assemble the information it had found, summarising relevant content (with

the summariser) to produce a comprehensive report which linked back to the original

sources. (It is harder for LLMs to explain where an item of knowledge came from, as their

learnt knowledge is distributed across all the parameters, rather than being localised.)

The Concept Engine was therefore capable of explanation, a valuable characteristic in AI.

We pushed the barriers of AI into analysing and

understanding what it could see using semantic image segmentation.

IOP 360

Intelligent Observation Point 360 can analyse, describe, or take

action on images or video. It reduces the image to a set of components, whose

inter-relationships combined with perspective can then be used to deduce what is being

looked at, or from video frame differences, what is happening.

Under the hood, it uses a fast method of segmenting the image to meaningful components

(ie they can be annotated and would be verified as meaningful by a person) from which

it can deduce a hierarchical description of the scene and from that can deduce what is

being looked at. I do not believe this technique is used anywhere else.

Back in 2000 few would think of putting AI in a Mars lander,

yet we did, and at the time it was some of the most advanced AI around, and

its unique language analysis technique arguably still is...

Virtual Astronaut

At the turn of the century at Aerospace Scientific we developed the

Virtual Astronaut, which would have been uploaded to Beagle-II on Mars had it landed

successfully. The aim was for people to e-mail the lander which would understand what it

could see and sense through cameras and instruments, read their e-mail, and reply

meaningfully.

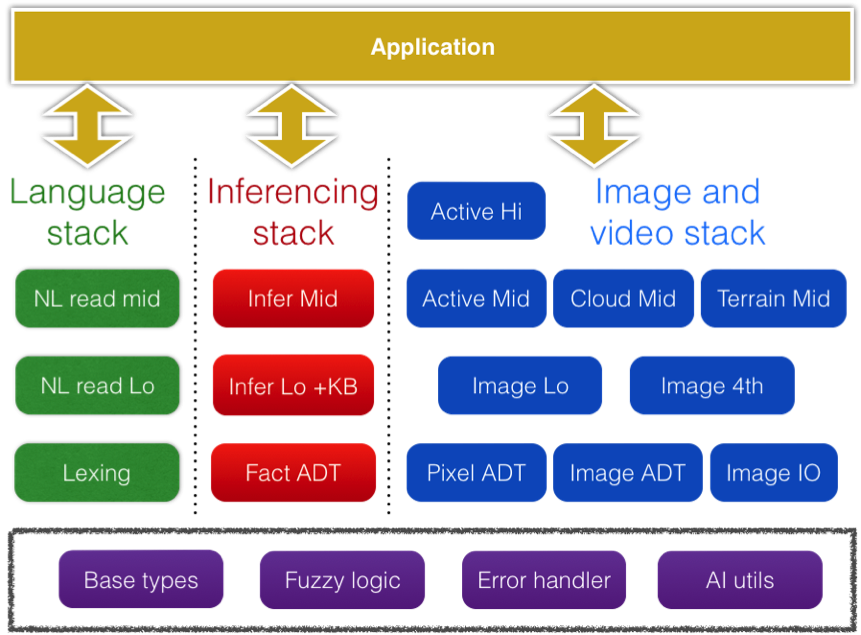

The Virtual Astronaut works by reducing all incoming information – including camera

images and language from the e-mail – to sets of assertions, and the architecture is

illustrated on the right. Specialist recognisers were

used for different media types, with language being understood by, primarily, an

Inferencing system. A multi-stage forward-chaining inference engine deduced both

intermediate assertions (of different lifetimes), frames, and second stage rules, which

were then invoked to produce output assertions, which a generative grammar used to write

the reply.
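
Forward chaining over a common pool of assertions can be sketched very simply. This is a single-stage illustration with invented assertions and rules; the multi-stage engine, assertion lifetimes, and frames described above are not modelled.

```python
def forward_chain(facts, rules):
    """Apply rules repeatedly until no rule can add a new assertion."""
    facts = set(facts)
    changed = True
    while changed:
        changed = False
        for premises, conclusion in rules:
            if premises <= facts and conclusion not in facts:
                facts.add(conclusion)  # all premises hold, so assert the conclusion
                changed = True
    return facts

# Hypothetical assertions: two from an image recogniser, one from the e-mail.
RULES = [
    (frozenset({"sees red soil", "sees rocks"}), "sees martian surface"),
    (frozenset({"sees martian surface", "asked about view"}), "describe landscape"),
]
derived = forward_chain({"sees red soil", "sees rocks", "asked about view"}, RULES)
```

The second rule only fires because the first has added an intermediate assertion; an output assertion such as `describe landscape` is what a generative grammar would then turn into text.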

This method of unifying image, data, and language to a common representation

is very effective. It still works and is a fascinating demo. Unlike the LLM knowledge

representation - essentially patterns of language - this technique decodes language into

an explicit semantic representation, which could still be useful.

I re-implemented the Virtual Astronaut in a micro-controller for model rockets,

aircraft, and drones so that people can fly and communicate with a version of themselves,

which will react as they would.

For many years, people hypothesised that real AI

would be achieved when it was big enough. So a natural question: just how small can AI

be?...

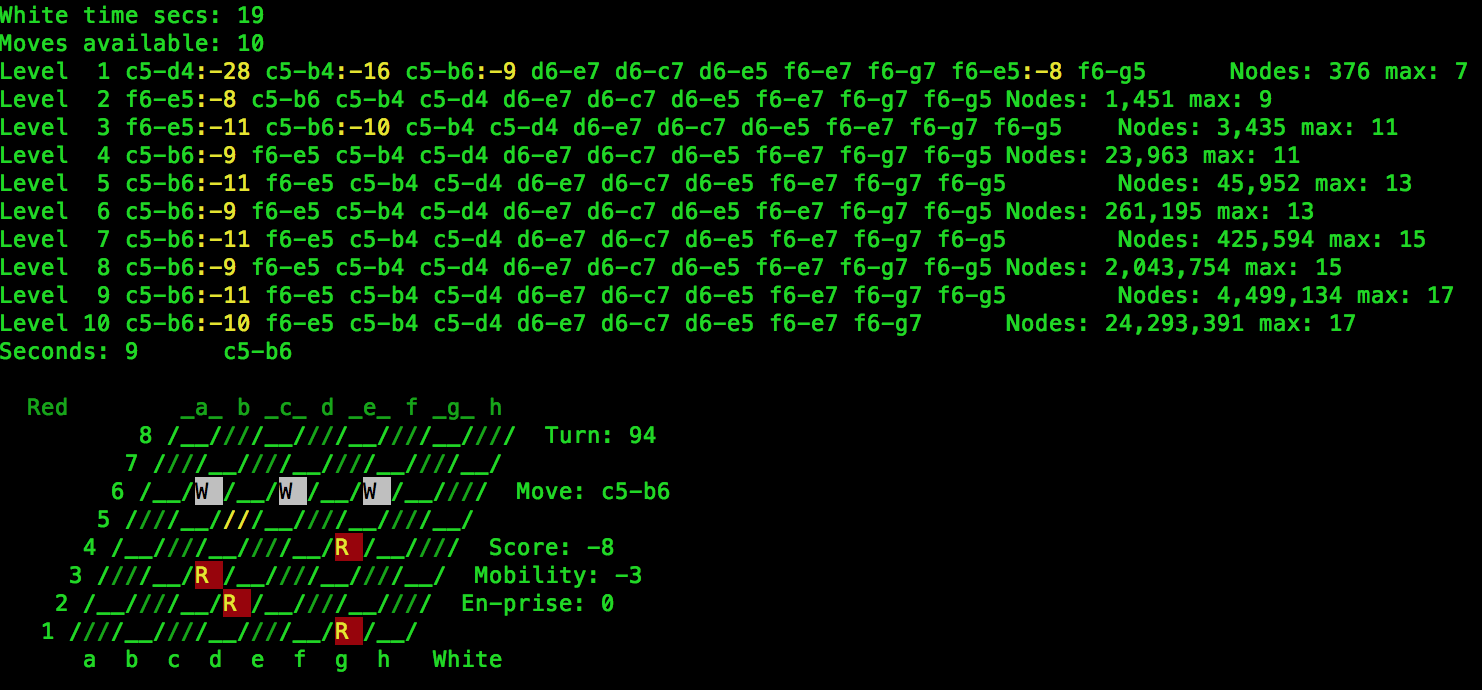

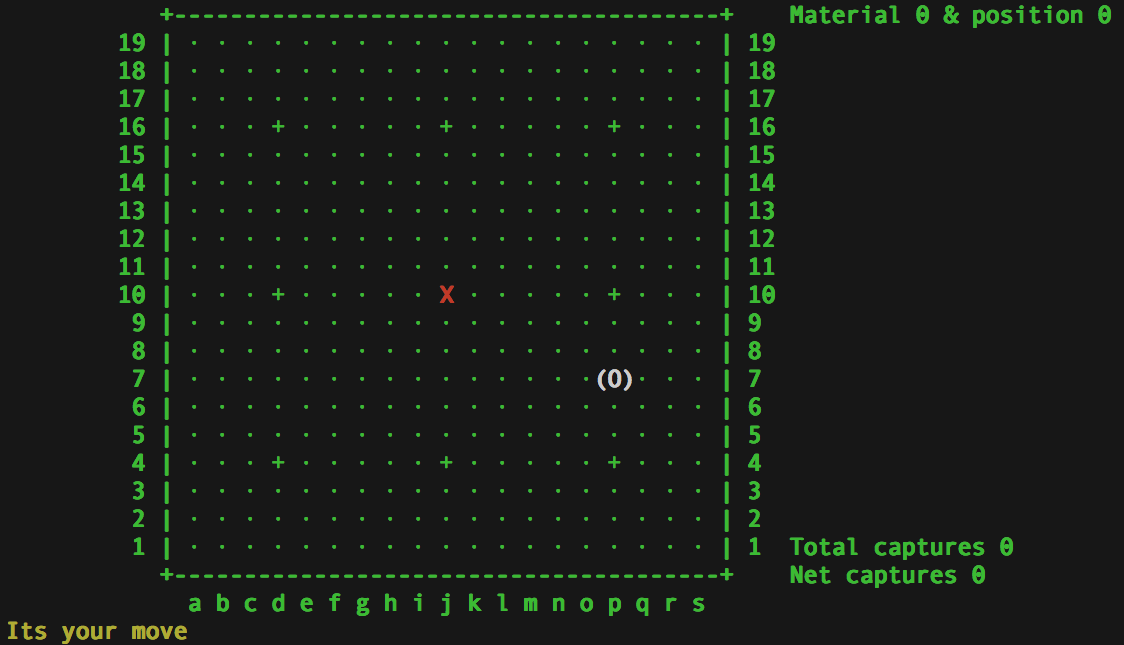

Imp Draughts

Imp Draughts is a tiny, but full, draughts program which can play

a 'good' game of draughts running on an eight-bit 16 MHz micro-controller with 2 KB of RAM,

partly written to show that AI is about smart, not big. Since it has to do so much with

so little, Imp Draughts demanded high performance algorithms. It and the later

Imp Chess yielded a surprising insight, explored

later on...

Meanwhile, we hope you admire the amazing 3D graphics of Imp Draughts when it is

running on a laptop.

"Explainability is the AI equivalence of

accountability." It makes no sense to hold "AI" responsible for its actions, but it does

make sense to hold either the developers or the users to account for mis-use, and to do

this we need explainability. Not just in applications where welfare

can be at stake, but also where we need to understand why just to be confident in

results.

Satellite Image Understanding

We subsequently built a Satellite Image Understanding proof of concept to quickly

analyse satellite images, intended for slow space-based hardware.

The functional requirements were that it should:

run in the very constrained CPU and RAM of space-hardened hardware, AND

run quickly (within 14 seconds over station constrained by a low orbit)

apply rules which are both powerful and easy for a domain expert to write

detect and distinguish between (for example) clouds, snow, and sea pollution

be able to explain both textually and visually why it thinks this part of the

image is an oil slick

We (Aerospace Scientific) achieved this with a layered knowledge base, which reads a

bit like FORTH programming, with a similar 'incrementally build up the

concepts' paradigm. The lowest level was couched in terms of image processing, the mid

level mapped those concepts to high level image concepts, and the top level (written by

the domain expert) expressed the conditions under which snow, cloud, or pollution would

be seen.

We also investigated a real-time image analysing

system to find safe places to land on Mercury, which at the time meant landing on unknown

territory. This is hard because there is no

atmosphere, so it's "rockets all the way down", so the heavy weight of a power supply big

enough to run a radar altimeter is out. We solved this (in the lab) by using image

understanding to calculate altitude and so forth by rate of change of rate of change of

view with rocket firing, along with image analysis of a "safe place to land", whilst

optimising the trajectory to find a route with the highest probability of safe landing.

Simples.

Can AI yield benefits to humanity? Many of the techniques already

discussed clearly can, but the next one is my favourite on-going research...

Prosthetic Senses: Hearing

Many people are, or become, deaf or hard of hearing. I am developing devices which

render the spoken voice as patterns (which can be learnt to correspond to language),

either visual or vibrations. It works by visually (or through vibrations, which

calculations indicate are just feasible) presenting the formants of which speech syllables

are composed, extracting them digitally through the Hartley transform or in analog through

resonant circuits.
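
For readers unfamiliar with it, the discrete Hartley transform is a real-valued relative of the Fourier transform. The sketch below is illustrative only and is not the device's code: it uses a direct O(N²) sum rather than a fast algorithm, and it merely finds the strongest frequency bins in a synthetic two-tone signal, whereas real formant extraction has to track resonant peaks in the envelope of actual speech.

```python
import math

def hartley(samples):
    """Discrete Hartley transform: H[k] = sum of x[i] * (cos + sin)(2*pi*i*k/N)."""
    n = len(samples)
    return [
        sum(x * (math.cos(2 * math.pi * i * k / n) + math.sin(2 * math.pi * i * k / n))
            for i, x in enumerate(samples))
        for k in range(n)
    ]

def power_spectrum(samples):
    """Power per bin, equal to the Fourier power: (H[k]^2 + H[N-k]^2) / 2."""
    h = hartley(samples)
    n = len(h)
    return [(h[k] ** 2 + h[(n - k) % n] ** 2) / 2 for k in range(n // 2 + 1)]

def strongest_bins(samples, count):
    """Indices of the `count` most energetic frequency bins, in ascending order."""
    power = power_spectrum(samples)
    ranked = sorted(range(len(power)), key=power.__getitem__, reverse=True)
    return sorted(ranked[:count])

# Synthetic "two formant" signal: components at bins 5 and 12 of a 64-sample window.
N = 64
signal = [math.sin(2 * math.pi * 5 * i / N) + 0.5 * math.sin(2 * math.pi * 12 * i / N)
          for i in range(N)]
peaks = strongest_bins(signal, 2)
```

The transform needs only real arithmetic, which is one reason it suits small devices.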

Prosthetic Senses: Sight

Similarly many people are, or become, blind or partially so. I am developing a series

of wearable AI devices which give a blind person more information about their environment.

These combine:

sensors (i) ultra-sound or (ii) machine vision with cameras or (iii) light

sensors; with

AI recognisers of the environment from those senses, such as image recognition and

optical flow in the case of cameras; and with

renderers which portray the detected environment through (a) voice descriptions

(b) sound or (c) vibration, which is particularly helpful to those who are also

deaf.

The most promising approach is, in effect, to give people the echo location used by

dolphins, which are, after all, close-ish relatives. It is amazing how quickly the human

brain can integrate a new sense. In this approach the AI is working in harmony with

people, rather than instead of.

The resource-use of large Large Language Models, such as energy and

water for cooling, is a serious matter because of their environmental impact. Their

accuracy too, as probabilistic generators, can be questionable. Are there other

approaches?

Language Reasoning Model

This work continues that started with the conversation analysis, itself an early

LLM.

The aim is to combine the undoubted language abilities of

LLMs (or in this case a two-level model) with overt, explicit, and explainable reasoning.

Current work uses, amongst other novel techniques, semantic word embeddings...

LLMs represent "words" as vectors of approximately 1,000 numbers.

By subtracting the vector for "man" from the vector for "king" and adding the vector for

"woman" you get the vector for "queen". However, each dimension (or position in the

vector) does not actually correspond to any meaning. Given that some human languages,

albeit artificial ones, can get by with only 200 or so words, it seems plausible that

there are too many dimensions, and they are simply too big.

Embeddings

The embeddings approach I am developing, where each embedding is an unsigned integer,

has several interesting properties...

words which have similar meanings have similar values

as bits are dropped (off the right hand side), the now smaller number identifies the

cluster to which that pair, or quad, or oct, ... of words belongs. In other words,

the semantic relationships of a word are progressively generalised as the bit representation

is truncated.
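
The truncation property is easy to demonstrate with made-up values. The integers below are invented purely for illustration and are not output from the method; the sketch only shows how dropping low-order bits merges words into progressively wider clusters.

```python
# Hypothetical 8-bit embeddings: related words differ only in their low-order bits.
EMBEDDING = {
    "cat": 0b10100110,
    "dog": 0b10100111,
    "lion": 0b10100100,
    "car": 0b01010010,
    "lorry": 0b01010011,
}

def cluster(word, dropped_bits):
    """Identify a word's cluster by dropping bits off the right-hand side."""
    return EMBEDDING[word] >> dropped_bits

def same_cluster(a, b, dropped_bits):
    """True if two words fall into the same cluster at this level of generality."""
    return cluster(a, dropped_bits) == cluster(b, dropped_bits)
```

With one bit dropped, `cat` and `dog` share a cluster; with two dropped, `lion` joins them; `car` stays apart until almost every bit has gone.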

This can be computed, even for a large corpus, relatively quickly by using three earlier

techniques discussed here:

A Bullets-like approach, so that the data structures, whilst

still capturing all the needed information, are comparatively very small;

A technique similar to deterministic clustering to determine

relationships (preserved in those data structures);

Using the Order N optimisation for speed;

The upshot is... fast computation of semantically rich embeddings.

I am also working on a technique for more "traditional" word embeddings in which

each dimension of the vector is semantically meaningful to a person.

Large Language Models are making a huge impact, but they are not the only

disruptive AI going to change society, though they are currently in pole position. LLMs

understand language and other unstructured data like images, but the next technology

understands, well, numbers...

Symbolic Regression

Symbolic Regression endeavours to find the numerical relationships between numbers.

For example, given tables of forces, accelerations, and masses it would deduce the

formula F = m × a. In other words, Symbolic Regression can be used to uncover the laws

of physics, biology, aeronautics, astronomy...
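
At its very simplest, symbolic regression is a search over candidate formulae scored against the data. The sketch below is a brute-force toy and not the implementation mentioned here: it tries only expressions of the form "variable operator variable", and the table of values is invented for the example. Practical systems search far larger expression spaces, which is itself a search-space traversal problem.

```python
import itertools
import operator

OPS = {"+": operator.add, "-": operator.sub, "*": operator.mul, "/": operator.truediv}

def symbolic_regression(rows, inputs, target):
    """Return the two-variable formula with the smallest squared error on the table."""
    best = None
    candidates = itertools.product(itertools.permutations(inputs, 2), OPS.items())
    for (a, b), (symbol, fn) in candidates:
        error = sum((fn(row[a], row[b]) - row[target]) ** 2 for row in rows)
        if best is None or error < best[0]:
            best = (error, f"{target} = {a} {symbol} {b}")
    return best[1]

# Illustrative measurements of mass, acceleration, and force (non-zero to keep division safe).
TABLE = [
    {"m": 2.0, "a": 3.0, "F": 6.0},
    {"m": 1.5, "a": 4.0, "F": 6.0},
    {"m": 5.0, "a": 0.5, "F": 2.5},
]
formula = symbolic_regression(TABLE, ("m", "a"), "F")
```

The result is a readable formula rather than a set of opaque weights, which is what makes the technique explainable.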

The implications are - or will be - huge in terms of revolutionising scientific and

technological progress.

The implementation I am working on is fast and powerful. It can explain what it finds,

both as equations or as, for example, usable C code.

AI is making its way into many arenas, some of which are safety-critical,

like aviation.

Aviation

Working with professional flight instructors, I have been looking into applying AI on

full-scale commercial flight simulators. This is a family of opportunities, some of

which are:

AI flight training. A two stage process. Firstly the AI learns from an

instructor what good or bad executions of a manoeuvre look like, and secondly that

trained AI monitors the performance of students as they subsequently perform the

same manoeuvre giving helpful feedback. There are many obvious objections and

non-obvious difficulties to this which we have thought through and mitigated or

eliminated. It should be both cost-effective and enable students to practice

"privately", working at skills without being constantly assessed.

AI Flight Dynamic Models. Simply put, we believe we have a better way of

creating accurate flight dynamic models for simulators, especially for new

aircraft.

Collective Wisdom Flight Advice for training and safety. This should make

for safer flying.

Real-time AI co-pilot. To tell pilots things they need to know, which they

didn't (at that point) know they needed to know! The essence is to have an AI

co-pilot who understands the situation (being a pilot) and can therefore provide

advice. This system is prototyped and flyable. (A note on trade-marks: we use

the term "co-pilot" here as a term-of-the-art exactly because this software IS a

co-pilot. Please do not get it confused with a major product with the same name.

We are calling the project "Flight Crew".) There is a screen-shot below, with the

latest comment at the top...

Later versions of this are exploring advanced flight visualisations, which

integrate vertical, horizontal and navigational displays to provide

quick-to-assimilate situational awareness.

I have not disclosed much here because we believe this is commercially

viable, and would invite any flight simulator or aircraft manufacturer to

contact us.

Please have a read of the aviation article for

more insight on safe AI in aviation.

Until recently, all general-purpose programming languages, whether imperative,

functional, OO, or multi-paradigm, have instructed the computer as to the operations required to

solve the problem. Most languages transform higher-level abstractions to low-level

operations, but the algorithm was always specified.

declarative programming language with exact specifications.

General Purpose Declarative Programming Language

The research objective is to implement a general purpose declarative language, or

extension to an existing language, so that the programmer only has to specify what needs

to be accomplished: the system would invent the algorithm and steps required.

For example, suppose our C library lacked cube roots. A declarative extension, with a new

keyword "such", might read:

float CubeRoot ( float x )

{ return ( y such y*y*y == x ); }

Here CubeRoot has an input (it takes a float x) and an output (it returns float y, such

that y³ = x) specification. The declarative language pre-processor would

invent the function body (using selection, iteration, and recursion as needed), so that it

does indeed return ∛x. The code necessary to make an input→output

transformation (here numbers to their cube root) is referred to as an invented solution.

Conceivably, invented solutions could themselves be parameterised (perhaps using

meta-programming or template techniques), and present a family of solutions.
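
To make "invented solution" concrete, here is the kind of function body such a system might arrive at for the cube-root specification, written by hand in Python for illustration. It is an assumption about what an output could look like, not output from the research system. It also shows why an "accuracy" target is useful: with floating-point numbers the exact test y*y*y == x can rarely be met, so the check below accepts a small tolerance.

```python
def cube_root(x, tolerance=1e-12):
    """Return y with y*y*y approximately equal to x, found by bisection."""
    if x < 0:
        return -cube_root(-x, tolerance)  # cube roots are odd: handle the sign separately
    low, high = 0.0, max(1.0, x)          # the root of a non-negative x lies in this interval
    while high - low > tolerance * max(1.0, high):
        mid = (low + high) / 2
        if mid * mid * mid < x:
            low = mid
        else:
            high = mid
    return (low + high) / 2

def satisfies_spec(x, y, tolerance=1e-9):
    """Verification derived from the specification: is y*y*y equal to x, within tolerance?"""
    return abs(y * y * y - x) <= tolerance * max(1.0, abs(x))
```

A check like `satisfies_spec` is the sort of verification code that could be generated directly from the input→output specification.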

The fundamental AI technique has been de-risked: for a specific case, it has

successfully invented an algorithm to solve a real-world problem. (In fact, it provided

an optimum solution where I thought no solution existed at all.) It used general-purpose

inventive techniques, without special-case knowledge. An early enhancement would be the

ability to automatically factor a problem into sub-problems, each also described by the

same input→output specification.

It may be desirable to include target resource limits that, ideally, a solution should

have. (Eg maximum RAM use or running time; or minimum "accuracy".) The system could know

that until these targets were hit, it should not regard the function as sufficiently

solved.

A subroutine library would be used to avoid re-solving the same sub-problem for each

cycle of development, or save CPU resources across a team. As each subroutine must have

an input→output description, it would be easy to index, retrieve and re-use solutions.

This library could store:-

System invented solutions to programmer specified problems;

System invented solutions to system factored sub-problems;

Programmer solutions to fully input→output specified problems (ie sub-routines);

Fully specified sub-routines embodying known solutions (eg trig or Fourier

transforms)

The main disadvantages are:

the invention phase is likely to be extremely CPU intensive, though not on the

scale of training LLMs;

how the invented code works will often be unintelligible although

what it does will be clear - it will accomplish the specification.

An out-of-scope extension might be to employ natural language generation techniques to

describe the invented algorithm.

Potential advantages include:

substantial programmer productivity gain;

increased reliability through generating verification and test code from the

input→output specifications;

the ability to mix with “hand-written” code; and

some intractable problems may prove to have solutions.